Navigation as a Sense

This blog post walks through an interaction design project from the Interactivity course at Malmö University. The brief was to design for know-how activities using servo motors as our medium.

I’ve always been drawn to immersive experiences where the boundary between user, object, and activity dissolves. Technologies like AR, VR, and haptics are making this merging of experiencer and experience more natural than ever.

Take cycling: at first you’re acutely aware of every movement, but once you “know how,” balance, pedaling, and steering fade from awareness and you just ride. Philosopher Hubert Dreyfus (and later Heyer, 2018) calls this state coping. It made me wonder: what if we disrupted that fluidity? What if we shifted back into conscious awareness? Could that enrich the experience instead of slowing it down?

This tension between unconscious coping and deliberate, bodily-aware interaction became the foundation of our exploration, leading us to investigate vibration as a new form of communication.

From Servos to Signals

It began practically. My collaborator Jesper and I were designing a navigation system embedded in bike handlebars, one that could communicate turns and direction without requiring the rider to look at a screen.

We started with a spare handlebar we found in the design lab and a set of servos. Servos aren't made to vibrate; they're made to rotate to precise positions. Our first instinct was to attach a stick to the servo and let it strike the handlebar on each cycle. The stick was too thick. We tried a copper rod next. Still barely perceptible…

Video 1: experimenting with servos

The breakthrough came when we stopped thinking about the servo's designed function and started thinking about what else it could do. Instead of using the servo to create a vibration, we used the servo as the vibrating body, pushing it directly against the handlebar. It worked surprisingly well.

It's a small thing, but it's worth sitting with: the component we'd been using to drive a tool was itself the tool. We were just looking at it the wrong way.

Beyond biking: vibration as language

Once we had something we could feel, our supervisor Jens encouraged us to detach the concept from cycling entirely. The real question wasn't "how do we tell someone to turn left?" but "can vibration carry meaning at all, and how rich can that meaning get?"

Phones already use vibration. So do smartwatches. Morse code can be tapped out as vibration too. But these uses are all fairly binary: a buzz for a notification, a pattern for an alarm. What we were after was something more like a vocabulary. A set of patterns with consistent, transferable meanings. Signals that could be learned, or better yet, felt instinctively.

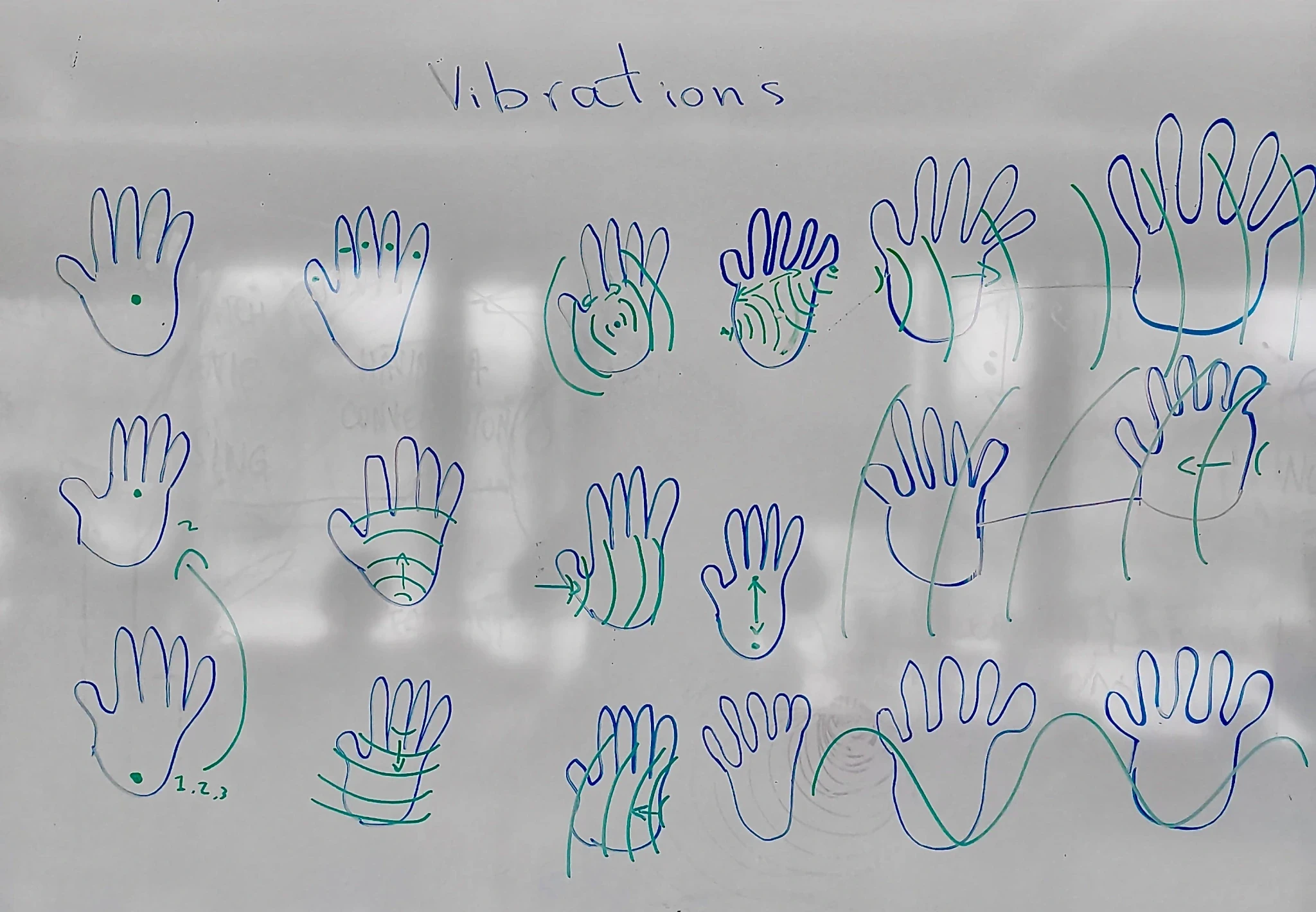

Figure 1: Some ways to communicate using vibration

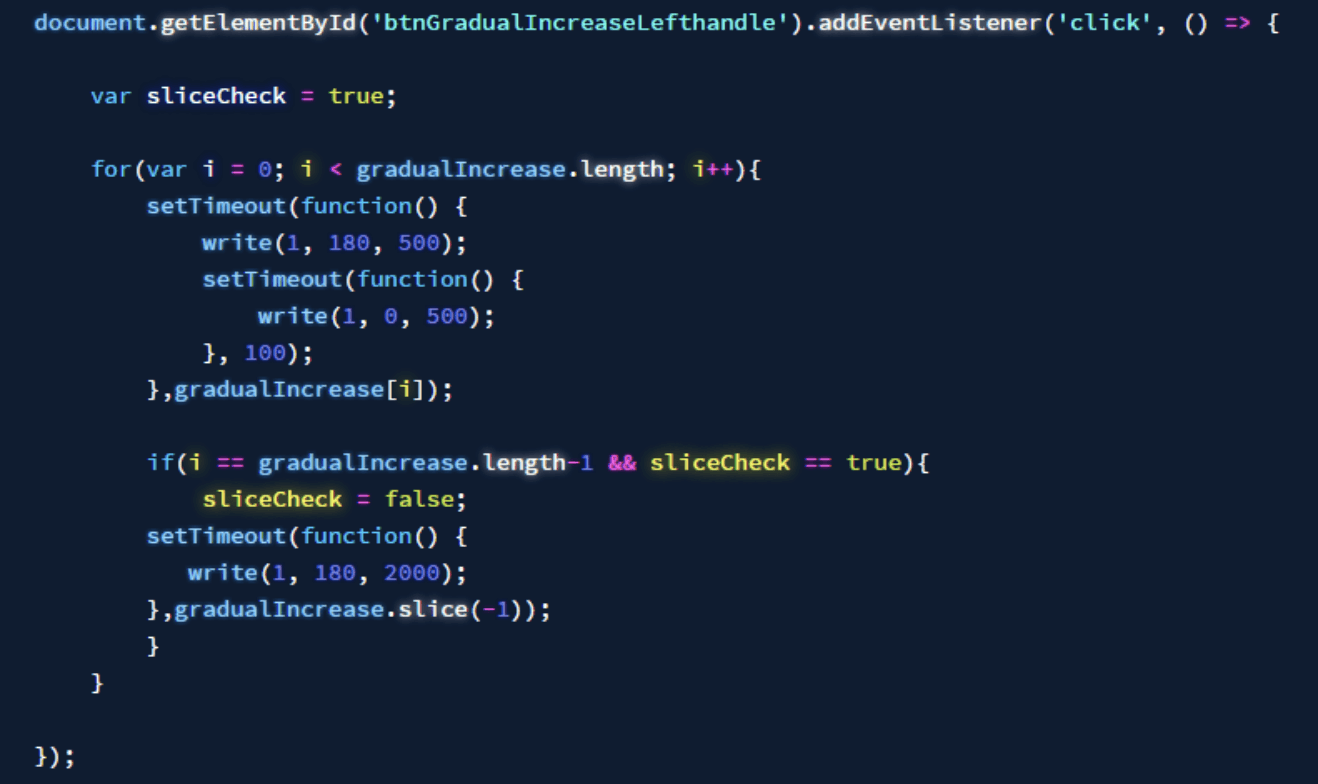

We coded a base system for manipulating timing, delays, speed, direction, and synchronisation across multiple servos. Once the foundation was there, designing new patterns became a matter of tweaking parameters.
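The project code itself appears here only as an image, so the following is a minimal Python sketch of what a parameter-driven base system could look like, not the code we ran. Everything in it is an assumption made for illustration: the `Buzz` fields, the 90-degree centre position, and the idea of shaking a servo by flipping it either side of centre.

```python
from dataclasses import dataclass

CENTRE_DEG = 90  # assumed resting angle of the servo horn


@dataclass(frozen=True)
class Buzz:
    """One burst of vibration on one servo (hypothetical structure)."""
    servo: int           # which servo to drive
    start_ms: int        # delay before the burst begins
    duration_ms: int     # how long the burst lasts
    step_ms: int         # time between flips; smaller means a faster buzz
    swing_deg: int = 10  # how far the horn moves either side of centre
    direction: int = 1   # +1 or -1: which side the first flick goes to


def schedule(buzzes):
    """Expand bursts into time-ordered (time_ms, servo, angle_deg) commands."""
    commands = []
    for b in buzzes:
        side = b.direction
        for t in range(b.start_ms, b.start_ms + b.duration_ms, b.step_ms):
            commands.append((t, b.servo, CENTRE_DEG + side * b.swing_deg))
            side = -side  # flip each step so the servo shakes rather than travels
        # Return to centre when the burst ends.
        commands.append((b.start_ms + b.duration_ms, b.servo, CENTRE_DEG))
    return sorted(commands)


# Two servos buzzing in sync, then the same pair with servo 1 delayed by 100 ms.
in_sync = schedule([Buzz(0, 0, 200, 50), Buzz(1, 0, 200, 50)])
offset = schedule([Buzz(0, 0, 200, 50), Buzz(1, 100, 200, 50)])
```

With a structure like this, a new pattern is just a different list of `Buzz` values: change `step_ms` for speed, `start_ms` for delay and synchronisation, and `direction` for which way the first flick goes.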

Video 2: examples of vibration patterns

Gradual Increase: intensity rising over time. We anchored it to the bike initially: the feeling of getting closer to a destination, like the mounting beeps of a parking sensor translated into touch. But the pattern itself is abstract. It could mean progress, proximity, success.

Gradual Decrease: the reversal. Here's where Jesper and I diverged. I preferred a clean mirror of the increase pattern. He added a short buzz at the beginning as a kind of warning prefix. Both felt distinct. Both communicated something. That small disagreement was its own discovery: tiny changes in rhythm alter meaning. A fraction of a second. A single pulse added to the front. And you're saying something different.

Alternating Waves: with multiple servos across both handlebars, we could create sensations that swept across the hands rather than pulsing in one spot. A wave moving left to right prompted the body to lean right, almost instinctively. No interpretation required. The body just followed.

Figure 2: the code to manage the vibration
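To make the three patterns above concrete, here is an illustrative Python sketch that expresses each one as a list of timed events. It is not the original implementation. The function names, the default timings, the 0 to 1 intensity scale, and the assumption that servos are numbered left to right are all hypothetical.

```python
def gradual_increase(steps=4, step_ms=200):
    """Return (start_ms, duration_ms, intensity) events with rising intensity."""
    return [(i * step_ms, step_ms, (i + 1) / steps) for i in range(steps)]


def gradual_decrease(steps=4, step_ms=200, warning_ms=0):
    """Mirror of the increase. If warning_ms > 0, prepend a short warning buzz."""
    events = []
    offset = 0
    if warning_ms:
        events.append((0, warning_ms, 1.0))
        offset = 2 * warning_ms  # the buzz, then an equal pause before the ramp
    for i in range(steps):
        events.append((offset + i * step_ms, step_ms, (steps - i) / steps))
    return events


def wave(servos, lag_ms=80):
    """Return (start_ms, servo) pairs that fire the servos one after another."""
    return [(i * lag_ms, servo) for i, servo in enumerate(servos)]


rising = gradual_increase()
mirrored = gradual_decrease()               # the clean mirror
warned = gradual_decrease(warning_ms=100)   # the version with a warning prefix
left_to_right = wave([0, 1, 2, 3])          # assumes servo 0 is leftmost
```

The two versions of the decrease differ by a single argument, which is the point of the disagreement described above: one extra pulse at the front is a small change in the data and a noticeable change in what the hand feels.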

What neuroplasticity says

David Eagleman has spent years asking a version of this same question from a neuroscience angle. He calls it sensory addition. The premise is that the brain doesn't care much about which organ delivers information, only that the information arrives in a consistent and decodable pattern. Given enough repetition, the brain learns to interpret new input streams as if they were native.

In one of Eagleman's most striking experiments, people wore vests covered in vibrating motors, each mapped to a different frequency of sound. Deaf participants, after training, began to perceive speech through their torsos. The skin became a hearing organ. Not metaphorically, but neurologically.

The same logic underlies work by Paul Bach-y-Rita, who decades earlier showed that blind participants could navigate spaces and recognise objects through cameras feeding data into tactile arrays on their tongues or backs. With practice, they stopped perceiving the stimulation as touch. They perceived it as vision.

This is neuroplasticity doing what it does best: rewriting the map. The brain allocates cortical real estate based on use, not birthright. A sense you feed consistently will grow.

What we were doing with handlebars and servos was a naive but earnest version of the same idea. If you always feel a leftward wave before a left turn, and the body always responds to it, at some point the brain stops asking "what does this mean?" and just responds. The signal becomes fluent.

Building a vocabulary

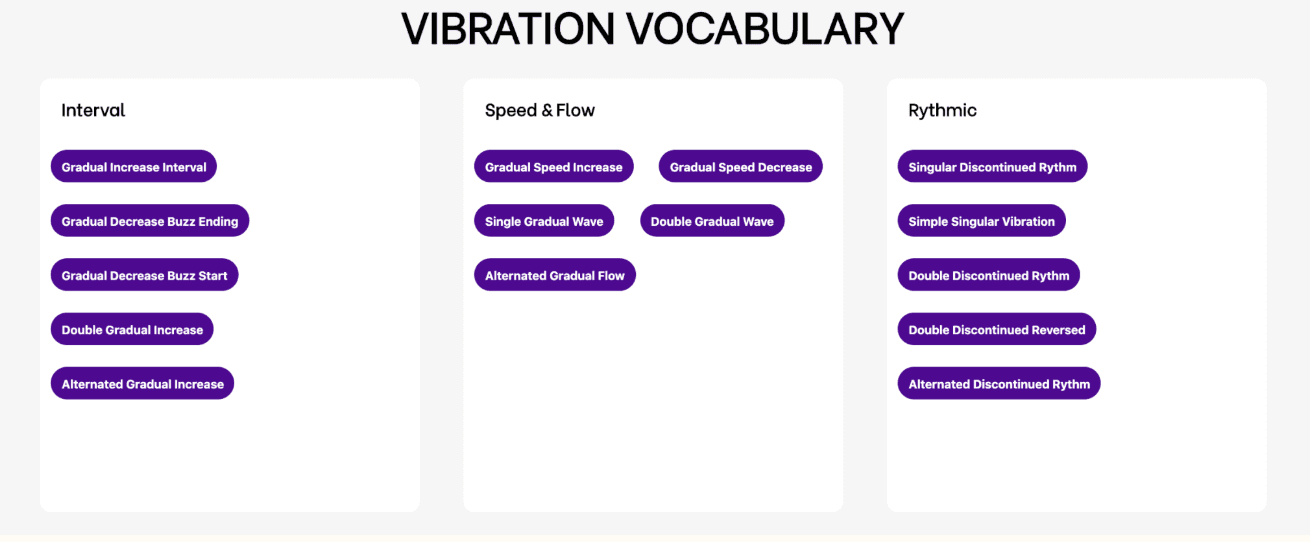

We organised our growing library of patterns into three categories:

Interval Vibrations: recognisable patterns with pauses built in, like the Gradual Increase Interval. These feel abstract and transferable. They don't presuppose a context.

Speed & Flow Vibrations: continuous waves or accelerations that trigger instinctive responses. The body processes these before the mind interprets them. A sweeping wave from left to right across both hands is less a message and more a nudge.

Rhythmic Vibrations: composed sequences that function more like words. Distinct, learnable, but requiring some degree of prior exposure. Like the difference between a language's grammar and its vocabulary.

The distinction matters because it maps onto something real about how humans learn new sensory channels. Some patterns can be understood immediately, by anyone, because they borrow from physical intuitions already in the body. Others need to be taught, but once learned, they become automatic. The question of how to balance these two registers is essentially a UX question. How much can you offload to instinct, and how much do you ask the user to learn?
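One way to keep such a vocabulary manageable is to store each pattern with its category and a flag for whether it has to be taught. The Python sketch below is only an illustration of that idea. The entry names, the `needs_learning` flag, and the placeholder rhythm are hypothetical, and treating interval patterns as instinctive is an assumption rather than something we tested.

```python
from dataclasses import dataclass
from enum import Enum


class Category(Enum):
    INTERVAL = "interval"      # recognisable patterns with pauses built in
    SPEED_FLOW = "speed_flow"  # continuous waves or accelerations
    RHYTHMIC = "rhythmic"      # composed sequences, closer to words


@dataclass(frozen=True)
class Entry:
    name: str
    category: Category
    needs_learning: bool  # True if the pattern must be taught before it works


VOCABULARY = [
    # Assumption: interval patterns are treated as instinctive here.
    Entry("gradual_increase_interval", Category.INTERVAL, needs_learning=False),
    Entry("wave_left_to_right", Category.SPEED_FLOW, needs_learning=False),
    # Placeholder name; stands in for any composed rhythmic sequence.
    Entry("example_rhythm", Category.RHYTHMIC, needs_learning=True),
]


def by_register(vocabulary):
    """Split pattern names into the instinctive and the learned register."""
    instinctive = [e.name for e in vocabulary if not e.needs_learning]
    learned = [e.name for e in vocabulary if e.needs_learning]
    return instinctive, learned


instinctive, learned = by_register(VOCABULARY)
```

Keeping the flag explicit makes the balance visible: the longer the learned list grows relative to the instinctive one, the more the design asks of the user.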

Figure 3: vibration vocabulary

What this points toward

Wearable technology is still mostly preoccupied with replicating what screens do, just closer to the body. Notifications. Step counts. Text previews. The assumption is that information needs to be read, not felt.

But the body is an extraordinary receiver. We can perceive vibration across a surprisingly wide frequency range. We can distinguish location, intensity, rhythm, and direction simultaneously. We can learn, through repetition, to attach meaning to patterns that initially mean nothing. The work by Eagleman, Bach-y-Rita, and others suggests the ceiling here is much higher than current devices are reaching for.

Navigation is just one application. Assistive technology is another. So are spatial awareness, athletic feedback, and any context where looking away carries a cost. The design space is genuinely wide.

What I took from this project is that the interesting work isn't in the hardware; it's in the vocabulary. Which patterns are learnable? Which are instinctive? What makes a vibration feel urgent versus ambient? Where does a signal cross from noise into meaning?

And most importantly, how might we utilise neuroplasticity in developing human-computer interactions?

References

Bach-y-Rita, P. (1972). Brain Mechanisms in Sensory Substitution. New York: Academic Press.

Eagleman, D. (2020). Livewired: The Inside Story of the Ever-Changing Brain. London: Canongate Books.

Heyer, C. (2018). Designing for coping. Interacting with Computers, 30(6), 492–506. https://doi.org/10.1093/iwc/iwy025